Runway ML has become one of the most widely recognized platforms in the generative video space. The platform allows creators to generate video clips from text prompts, turn still images into animated sequences, and edit videos with AI-powered tools. Runway’s Gen-2 model introduced a workflow where a simple description can become a short cinematic video, which helped the platform gain strong adoption among filmmakers, marketers, and social media creators.

However, many creators eventually begin exploring other tools. One reason is that rendering limits and credit-based pricing can restrict experimentation when users want to generate many variations of a scene. Some creators also look for tools that focus on specific tasks such as stylized animation, avatar-based videos, or faster social media content creation.

Another reason is creative flexibility. Generative video models behave differently depending on their training data and design goals. Some tools specialize in photorealistic footage while others produce artistic animation styles. Because of these differences, many professionals test several platforms to determine which system fits their workflow best.

The following tools are some of the closest alternatives to Runway ML because they offer similar capabilities in areas such as text-to-video generation, image-to-video animation, and AI-assisted video creation.

Pika Labs is one of the fastest-growing AI video generation tools on the market. The platform allows users to generate short animated clips from simple text prompts or existing images. After a prompt is entered, the system creates a short video that attempts to interpret the description visually. Many creators use it to test scene ideas quickly before moving into more advanced editing tools. (https://pika.art/login)

One of the reasons Pika Labs has gained popularity is its speed. Compared with several other generative video models, its rendering tends to be relatively fast. This makes the platform useful for experimentation, because users can generate multiple clips quickly and choose the best result.

While Runway ML provides deeper editing controls, Pika focuses more on the generation stage of video creation. The system is designed for producing short clips rather than managing complex editing timelines.

Highlights

1. Pika Labs allows users to generate short videos directly from text prompts, which makes it similar to Runway’s generative video workflow.

2. The platform also supports animating still images, allowing creators to transform static visuals into moving clips.

3. One of its biggest strengths is fast rendering speed, which helps creators experiment with multiple visual ideas quickly.

4. Compared with Runway ML, the platform offers fewer editing tools and does not provide advanced timeline based video editing features.

5. Pika Labs offers a limited free tier, while paid plans increase generation limits and allow higher-resolution video exports.

6. The platform works best for storyboarding scenes, generating short cinematic clips, and testing visual concepts before full production.

Kaiber approaches generative video from a more artistic perspective. Instead of focusing only on text prompts, the platform encourages users to start with an image or reference frame. That image is then animated by AI models that create motion, transitions, and stylistic transformations. (https://kaiber.ai/)

This workflow makes Kaiber popular among musicians and digital artists who want to generate animated visuals for music videos or promotional content. Many users create short looping animations or stylized sequences that are designed for social media.

Compared with Runway ML, Kaiber focuses less on photorealistic video generation and more on artistic transformation. The results often resemble animated or surreal visuals rather than cinematic footage.

Highlights

1. Kaiber allows creators to transform static images into animated sequences using AI-driven motion generation.

2. The platform also supports prompt-based visual transformations that can create stylized video clips from text descriptions.

3. One of its strongest advantages is the ability to produce artistic and experimental visuals that work well for music videos.

4. Compared with Runway ML, Kaiber provides fewer controls for detailed scene editing after the video has been generated.

5. The platform uses a credit-based pricing system in which higher plans allow longer video generation and higher export quality.

6. Kaiber is particularly useful for music videos, artistic visual projects, and stylized social media animations.

Luma AI has gained attention for its work in neural rendering and 3D scene generation. While many AI video tools focus only on prompt-based generation, Luma explores a different direction by using neural networks to reconstruct scenes in three dimensions. This technology allows users to capture real-world environments and convert them into digital scenes that can later be animated. (https://lumalabs.ai/create/ai-video-generator)

The platform has also introduced generative video capabilities that allow users to produce short clips from prompts or reference images. Because of its focus on realism and spatial depth, Luma often produces videos that feel more grounded than those of many purely prompt-based generators.

Compared with Runway ML, Luma AI is particularly strong in areas related to environment reconstruction and 3D scene capture.

Highlights

1. Luma AI allows creators to generate videos from prompts while also supporting advanced neural rendering technology.

2. The platform can reconstruct real-world scenes using neural networks, producing highly detailed digital environments.

3. One of its major strengths is realistic lighting and spatial depth in generated scenes.

4. Compared with Runway ML, the platform currently provides fewer built-in editing tools for refining generated clips.

5. Pricing is typically based on generation credits, with usage limits that vary by service plan.

6. Luma AI is well suited for filmmakers, 3D artists, and creators who want realistic environments in AI-generated video.

Synthesia focuses on a different segment of AI video generation. Instead of producing cinematic scenes from prompts, the platform generates videos featuring AI avatars that speak scripted text. This technology is widely used by businesses that want to create training videos, marketing explainers, or corporate presentations. (https://www.synthesia.io/)

Users write a script, choose an AI presenter, and the system generates a video where the avatar speaks the text in a realistic voice. Although this is different from Runway’s cinematic video models, Synthesia still belongs to the generative video category because the entire video is created by AI.

Compared with Runway ML, Synthesia is much more structured and focused on professional communication rather than experimental video generation.

Highlights

1. Synthesia allows users to generate complete videos using AI avatars that speak scripted text.

2. The platform supports many languages and voice options, which makes it useful for global business communication.

3. One of its strongest advantages is the ability to produce training videos and product explainers quickly without filming actors.

4. Compared with Runway ML, Synthesia cannot generate cinematic scenes from prompts or animate images into complex visuals.

5. Pricing is typically subscription based, with higher plans allowing more video minutes and additional avatar options.

6. The platform is best suited for corporate training, marketing explainers, and educational content.

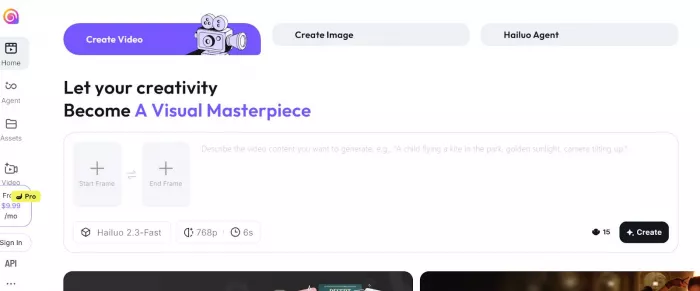

Hailuo AI is another generative video platform that has gained attention for its text-to-video capabilities. The system allows users to describe a scene and generate a short video clip that attempts to visualize that description. Many early users compare it with Runway because both tools focus on prompt-driven video creation. (https://hailuoai.video/)

One aspect that stands out is the system’s ability to generate dynamic motion and cinematic camera movements from text prompts. This makes it useful for testing visual ideas before filming.

However, the platform is still maturing, and its surrounding feature set is less developed than that of more established tools.

Highlights

1. Hailuo AI allows users to generate short video clips directly from text prompts.

2. The platform focuses on cinematic motion generation, including camera movement and scene transitions.

3. One advantage is that it can produce visually dynamic clips from simple descriptions.

4. Compared with Runway ML, the ecosystem of editing tools and integrations is still relatively limited.

5. Pricing structures vary depending on platform access and generation credits.

6. Hailuo AI is most useful for experimenting with prompt-based cinematic scene creation.

Stable Video Diffusion is an open model developed by Stability AI. Rather than a single hosted platform, it is a generative model that developers and creators can run locally or integrate into other tools. (https://stability.ai/)

The model generates short video clips from a still image using diffusion-based AI technology; text-to-video workflows typically pair it with a text-to-image model that produces the starting frame. Because its weights are openly available, many developers experiment with custom workflows that extend its capabilities.

Compared with Runway ML, Stable Video Diffusion offers more flexibility for developers but requires technical setup.

Highlights

1. Stable Video Diffusion allows users to generate video clips from images using diffusion-based AI models.

2. The model can be integrated into custom software or run locally on powerful hardware.

3. One advantage is the flexibility that comes with openly available model weights.

4. Compared with Runway ML, the setup process is more technical and requires stronger computing resources.

5. Pricing depends on computing costs rather than a traditional subscription model.

6. The model is best suited for developers, researchers, and advanced creators experimenting with generative video systems.

The rapid development of generative video technology means creators no longer have to rely on a single platform. While Runway ML remains one of the most advanced tools for prompt-based video creation and AI editing, alternatives such as Pika Labs, Kaiber, Luma AI, Synthesia, Hailuo AI, and Stable Video Diffusion offer different strengths depending on the workflow. Some prioritize cinematic generation, while others focus on artistic animation, avatar videos, or open models for developers. For creators experimenting with AI video production, testing multiple tools often leads to better results, because each platform approaches generative video in a slightly different way. Choosing the right tool ultimately depends on whether the goal is filmmaking, social media content, business videos, or experimental visual projects.
